
Kimi AI, developed by Moonshot AI, represents a paradigm shift in large language models with its 1 trillion parameter Mixture-of-Experts architecture that activates only 32 billion parameters per query, delivering exceptional efficiency alongside state-of-the-art performance.

Kimi AI is a state-of-the-art artificial intelligence system developed by Moonshot AI (月之暗面科技有限公司), a Beijing-based startup founded in March 2023 by Yang Zhilin, a distinguished alumnus of Tsinghua University and former researcher at Baidu and Google [271][277].

The company has rapidly emerged as a significant player in the global AI landscape, with a strategic focus on creating advanced, open-weight large language models that excel in agentic intelligence, complex reasoning, and real-world task execution.

Kimi K2 has demonstrated exceptional performance across industry-standard benchmarks, often surpassing leading models from OpenAI, Anthropic, and Meta.

Its performance on benchmarks such as SWE-Bench (65.8%), LiveCodeBench (53.7%), and Humanity's Last Exam (44.9% with tools) highlights its advanced problem-solving and tool-use abilities [476][478].

The emergence of Kimi K2 has profound strategic implications for the AI search and assistant landscape, signaling a move towards more specialized, agentic, and open models.

Unlike traditional search engines or general-purpose chatbots, Kimi K2 is designed to be an active agent that can interact with its environment, use tools, and complete complex tasks [499].

Executive Summary

Key Insights

Overview

Performance Excellence

Strategic Implications

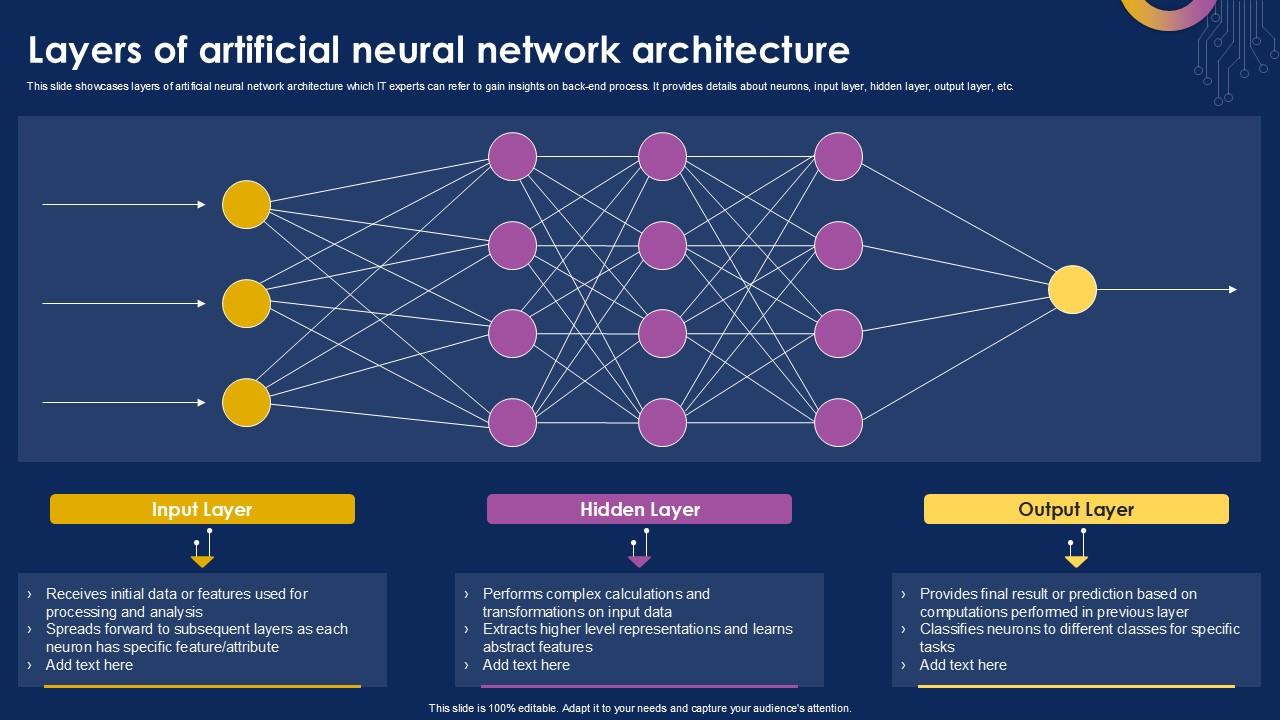

The technical foundation of Kimi K2 is built upon a sophisticated Mixture-of-Experts (MoE) architecture, a design choice that enables the model to achieve a remarkable balance between immense scale and computational efficiency.

This architecture is a significant departure from traditional dense models, where all parameters are active during every computation.
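
The gap between total and active parameters can be made concrete with the two figures quoted in this report (1 trillion total, 32 billion active). The Python sketch below only does that arithmetic. The constant and function names are illustrative, and real compute and memory costs do not scale exactly with this ratio (in MoE models the active count is usually measured per token).

```python
# Figures quoted in this report; everything else here is illustrative.
TOTAL_PARAMS = 1_000_000_000_000   # 1 trillion parameters in the full model
ACTIVE_PARAMS = 32_000_000_000     # 32 billion parameters activated per query


def active_fraction(active: int, total: int) -> float:
    """Share of the model's parameters that take part in one forward pass."""
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Active share: {fraction:.1%}")
print(f"Total-to-active ratio: {TOTAL_PARAMS / ACTIVE_PARAMS:.2f}x")
```

A dense model of the same size would, by definition, have an active share of 100%.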

The core innovation of the MoE architecture lies in its dynamic expert activation mechanism.

This system intelligently routes each input to a select group of specialized "expert" sub-networks within the model, ensuring that the most relevant knowledge and computational resources are applied to the task at hand.

Intelligent Routing: Dynamic gating network selects optimal experts

Specialized Experts: Domain-specific sub-networks for optimal performance

Efficient Computation: Sparse activation reduces computational overhead
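
The routing idea described above can be sketched as generic top-k gating in NumPy. This is a toy illustration of the technique, not Kimi K2's implementation: the expert count, `top_k`, the dimensions, and the use of a single linear map per expert are all assumptions chosen to keep the example small.

```python
import numpy as np


def softmax(x: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    z = x - x.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def moe_forward(x, gate_w, expert_ws, top_k=2):
    """Route each token to its top_k experts and mix their outputs.

    x: (tokens, d_model), gate_w: (d_model, n_experts),
    expert_ws: (n_experts, d_model, d_model), one toy linear map per expert.
    """
    logits = x @ gate_w                                   # gating scores per token
    top_idx = np.argsort(logits, axis=-1)[:, -top_k:]     # chosen experts per token
    top_logits = np.take_along_axis(logits, top_idx, axis=-1)
    weights = softmax(top_logits)                         # normalise over chosen experts only
    out = np.zeros_like(x)
    for t in range(x.shape[0]):
        for slot in range(top_k):
            e = top_idx[t, slot]
            # Only the selected experts are evaluated: this is the sparse activation.
            out[t] += weights[t, slot] * (x[t] @ expert_ws[e])
    return out, top_idx


rng = np.random.default_rng(0)
n_experts, d_model, tokens = 8, 16, 4                     # hypothetical sizes
x = rng.normal(size=(tokens, d_model))
gate_w = rng.normal(size=(d_model, n_experts))
expert_ws = rng.normal(size=(n_experts, d_model, d_model))
y, chosen = moe_forward(x, gate_w, expert_ws, top_k=2)
print(y.shape, chosen.shape)
```

With 8 experts and `top_k=2`, each token touches only a quarter of the expert weights, which is the same principle behind the 32B-of-1T figure quoted earlier.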

Kimi K2 employs a Multi-head Latent Attention (MLA) mechanism, specifically designed to improve inference efficiency and enable the processing of long sequences of text.
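
One way to see why a latent attention scheme helps inference is to compare cache sizes. The sketch below assumes the commonly described form of MLA, in which each token's hidden state is compressed to a small latent vector that is cached, and keys and values are rebuilt from that latent when needed. It is single-head, omits positional encoding, and every dimension is hypothetical; whether Kimi K2's implementation matches these details is not stated in this report.

```python
import numpy as np


def latent_kv_cache(h, w_down):
    """Compress hidden states (seq, d_model) to latents (seq, d_latent). Only these are cached."""
    return h @ w_down


def attend_from_latents(q, latents, w_up_k, w_up_v):
    """Single-head attention with keys and values rebuilt from the cached latents.

    q: (d_head,), latents: (seq, d_latent), w_up_k and w_up_v: (d_latent, d_head).
    """
    k = latents @ w_up_k
    v = latents @ w_up_v
    scores = (k @ q) / np.sqrt(q.shape[-1])
    probs = np.exp(scores - scores.max())
    probs /= probs.sum()
    return probs @ v


rng = np.random.default_rng(0)
seq, d_model, d_latent, d_head, n_heads = 6, 64, 8, 16, 4   # hypothetical sizes
h = rng.normal(size=(seq, d_model))
w_down = rng.normal(size=(d_model, d_latent))
w_up_k = rng.normal(size=(d_latent, d_head))
w_up_v = rng.normal(size=(d_latent, d_head))

latents = latent_kv_cache(h, w_down)
out = attend_from_latents(rng.normal(size=d_head), latents, w_up_k, w_up_v)

full_cache = seq * 2 * n_heads * d_head   # floats cached by standard multi-head attention
latent_cache = seq * d_latent             # floats cached when only latents are stored
print(out.shape, full_cache, latent_cache)
```

The cache grows linearly with sequence length in both cases, so a smaller per-token footprint translates directly into longer sequences for the same memory budget.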

The model's ability to handle long-context windows enables sophisticated applications such as:

Technical Architecture

Mixture-of-Experts (MoE) Model Design

Scale and Efficiency

Dynamic Expert Activation

Intelligent Routing

Specialized Experts

Efficient Computation

Advanced Attention Mechanisms

Multi-head Latent Attention (MLA)

Long-Context Handling

Pre-training Phase: Massive-scale unsupervised learning on a diverse corpus, including scientific literature, technical documentation, and open-source code repositories.

MuonClip Optimizer: A novel optimization algorithm with QK-clip technology that keeps training stable at very large scale, enabling training on 15.5 trillion tokens without any loss spikes [483].

Reinforcement Learning from Human Feedback (RLHF): Human evaluations guide model alignment with preferences for helpfulness, accuracy, and safety.

Agentic Capabilities Training: Specialized training for tool use, web browsing, and complex multi-step task execution.
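
The QK-clip idea mentioned above can be illustrated with a small NumPy sketch. It assumes that QK-clip works by rescaling the query and key projection weights whenever the largest attention logit exceeds a threshold, splitting the correction evenly between the two matrices. The threshold `tau`, the single-head setup, and all sizes are hypothetical, and the optimizer's actual update rule is not shown.

```python
import numpy as np


def max_attention_logit(x, w_q, w_k):
    """Largest scaled pre-softmax attention logit for inputs x under one head."""
    q, k = x @ w_q, x @ w_k
    return float(np.max(q @ k.T) / np.sqrt(w_q.shape[1]))


def qk_clip(x, w_q, w_k, tau):
    """Rescale W_q and W_k so the largest logit does not exceed tau (assumed rule)."""
    s_max = max_attention_logit(x, w_q, w_k)
    if s_max <= tau:
        return w_q, w_k              # logits already within bounds, leave weights alone
    gamma = tau / s_max
    scale = np.sqrt(gamma)           # logits are bilinear in W_q and W_k, so each gets sqrt(gamma)
    return w_q * scale, w_k * scale


rng = np.random.default_rng(0)
x = rng.normal(size=(10, 32))
w_q = rng.normal(size=(32, 8)) * 3.0  # deliberately large weights to trigger clipping
w_k = rng.normal(size=(32, 8)) * 3.0
tau = 5.0                             # hypothetical threshold

before = max_attention_logit(x, w_q, w_k)
w_q_clipped, w_k_clipped = qk_clip(x, w_q, w_k, tau)
after = max_attention_logit(x, w_q_clipped, w_k_clipped)
print(f"max logit before: {before:.2f}, after: {after:.2f}")
```

Bounding the logits this way limits how sharply the softmax can saturate, which is one plausible route to the stable, spike-free training the report describes.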

A defining feature of Kimi K2 is its "agentic" nature, which enables it to go beyond simple question-answering and actively perform tasks on behalf of the user through sophisticated integration with external tools.

Real-time Web Search: Access up-to-date information and perform research

Code Execution: Write, test, and debug code autonomously

Database Queries: Query and analyze structured data sources
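
The three tool categories above suggest a dispatch loop in which the agent picks a tool by name and runs steps in sequence. The sketch below shows that pattern with stub tools. None of these function names, the `TOOLS` registry, or the plan format come from Kimi K2's API; they are placeholders, and in a real agent the model would produce each step rather than follow a hard-coded plan.

```python
from typing import Callable, Dict, List, Tuple


def web_search(query: str) -> str:
    """Stub: a real tool would call a search backend."""
    return f"[stub search results for: {query}]"


def run_code(source: str) -> str:
    """Stub: runs a snippet and returns its `result` variable. Not a real sandbox."""
    scope: dict = {}
    exec(source, {"__builtins__": {}}, scope)
    return str(scope.get("result"))


def query_database(table: str) -> str:
    """Stub: looks up rows in an in-memory stand-in for a database."""
    fake_db = {"users": [("ada", 36), ("linus", 54)]}
    return str(fake_db.get(table, []))


# Hypothetical registry mapping tool names to callables.
TOOLS: Dict[str, Callable[[str], str]] = {
    "web_search": web_search,
    "run_code": run_code,
    "query_database": query_database,
}


def run_agent(plan: List[Tuple[str, str]]) -> List[str]:
    """Execute (tool_name, argument) steps in order and collect a transcript."""
    transcript = []
    for tool_name, argument in plan:
        tool = TOOLS.get(tool_name)
        if tool is None:
            transcript.append(f"{tool_name}: unknown tool")
            continue
        transcript.append(f"{tool_name}: {tool(argument)}")
    return transcript


plan = [
    ("web_search", "latest LiveCodeBench results"),
    ("run_code", "result = 2 + 3"),
    ("query_database", "users"),
]
transcript = run_agent(plan)
for line in transcript:
    print(line)
```

Keeping tools behind a name-to-callable registry means new capabilities can be added without changing the loop itself.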

Kimi K2 implements an episodic memory system that allows it to store and retrieve information from past interactions in a structured and efficient manner, enabling long-term context understanding.
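
The report gives no detail on how this memory is built, so the following is only a minimal sketch of the store-and-retrieve pattern it describes. The `Episode` and `EpisodicMemory` classes and the word-overlap scoring are assumptions made for illustration; a production system would more likely use embeddings or a dedicated index.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Episode:
    turn: int   # position of the interaction in the conversation history
    text: str   # what was said or observed


@dataclass
class EpisodicMemory:
    episodes: List[Episode] = field(default_factory=list)

    def store(self, text: str) -> None:
        """Append a new episode, numbered by arrival order."""
        self.episodes.append(Episode(turn=len(self.episodes), text=text))

    def retrieve(self, query: str, k: int = 2) -> List[Episode]:
        """Return up to k episodes sharing the most words with the query."""
        query_words = set(query.lower().split())
        scored = [
            (len(query_words & set(ep.text.lower().split())), ep)
            for ep in self.episodes
        ]
        matches = [(score, ep) for score, ep in scored if score > 0]
        # Highest overlap first; ties go to the more recent episode.
        matches.sort(key=lambda pair: (-pair[0], -pair[1].turn))
        return [ep for _, ep in matches[:k]]


memory = EpisodicMemory()
memory.store("User prefers Python for data analysis")
memory.store("User is based in Berlin")
memory.store("User asked about Python packaging tools")

for episode in memory.retrieve("which python tools", k=1):
    print(episode.turn, episode.text)
```

Retrieved episodes would then be placed back into the model's context, which is how earlier interactions can inform later turns.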

Complex Task Decomposition: Breaks down high-level tasks into manageable sub-tasks

Sequential Tool Orchestration: Coordinates multiple tools for complex workflows

Context-Aware Execution: Maintains task context across multiple interactions

Core Algorithms and Implementation

Multi-Stage Training Pipeline

Pre-training Phase

MuonClip Optimizer

Post-training Phase

Reinforcement Learning from Human Feedback (RLHF)

Agentic Capabilities Training

Agentic AI and Tool Integration

Real-time Web Search

Code Execution

Database Queries

Memory and Context Management

Episodic Memory System

Multi-turn Reasoning

Complex Task Decomposition

Sequential Tool Orchestration

Context-Aware Execution

Kimi K2 has consistently demonstrated its ability to outperform leading models from OpenAI, including GPT-4.1 and GPT-4o, on a variety of key benchmarks. This success challenges the notion that only closed-source models can reach the pinnacle of AI performance.

Exceptional results on LiveCodeBench and SWE-Bench

Strong foundation in STEM subjects

Superior tool use and multi-step reasoning

Performance Evaluation and Benchmarks

Superior Performance in Coding and Reasoning

Excellence in Mathematical and General Knowledge

Comparative Analysis with Leading Models

Key Advantages

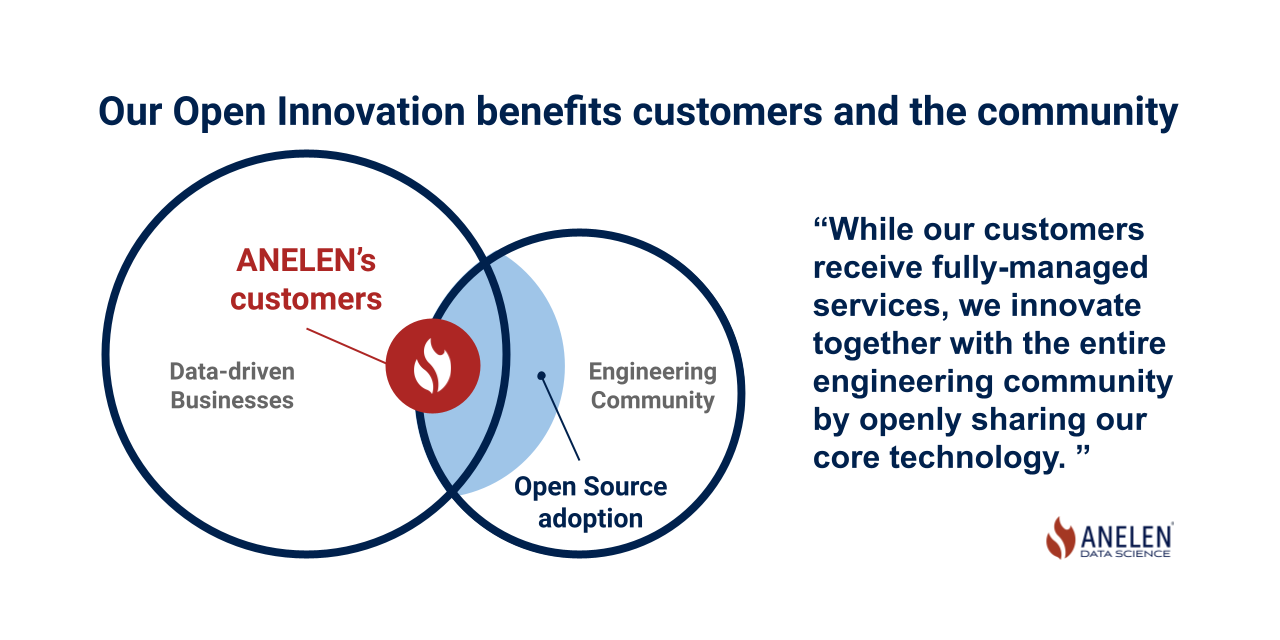

Moonshot AI's decision to release Kimi K2 as an open-weight model under a permissive license is a key part of its market strategy and a major differentiator from many of its competitors.

This approach fosters a vibrant developer ecosystem and drives research innovation.

Leadership Excellence: Founded by Yang Zhilin, a prominent AI researcher with a PhD from Carnegie Mellon University and experience at Google Brain and Facebook AI Research [421][426].

Vision for AGI: Building foundational models that pave the way to Artificial General Intelligence while democratizing access to powerful AI tools.

Market Potential and Strategic Positioning

Open-Weight and Open-Source Strategy

Strategic Benefits

Competitive and Disruptive Pricing

Foundational Strengths of Moonshot AI

Leadership Excellence

Vision for AGI

Architecture

Kimi AI: Single powerful Mixture-of-Experts model with 1T parameters, activating 32B per query

Perplexity AI: Multi-model approach using various providers (OpenAI, Anthropic, Meta)

Performance Focus

Kimi AI (Agentic Reasoning): Complex multi-step tasks with tool integration and autonomous execution

Perplexity AI (Real-time Retrieval): Up-to-date information with source citation and web integration

Market Strategy

Kimi AI (Open-Source): Open-weight model fostering community development and ecosystem growth

Perplexity AI (Proprietary): Closed-source model with subscription-based business model

Execute complex multi-step tasks autonomously without human intervention [476].

Comparative Analysis with Other AI Search Tools

Kimi AI vs. Perplexity AI

Architecture

Kimi AI

Perplexity AI

Performance Focus

Agentic Reasoning

Real-time Retrieval

Market Strategy

Open-Source

Proprietary

Kimi AI vs. Other Major AI Models

Unique Selling Proposition

Long Context Processing

Agentic Capabilities

Competitive Advantages in Programming

Software Development: Accelerated development cycles, automated testing, enhanced code quality

Research & Academia: Rapid literature review, hypothesis generation, data analysis automation

Business Operations: Process automation, decision support, knowledge management

Applications and Use Cases

Professional and Enterprise Applications

Advanced Programming Assistant

Data Analysis & Business Intelligence

Research & Document Analysis

Enterprise Adoption Benefits

Technical Advantages

Business Benefits

Consumer and Educational Use

Personal AI Assistant

Educational Tutor

Creative Content Generation

Transformative Impact Across Industries

Industry Transformation

Software Development

Research & Academia

Business Operations

References